Note: This project was confidential, so I have omitted and changed some details.

Goal

A large technology company had designed a simplified application for tracking sales opportunities and launched it to a small group of pilot users. They wanted to measure its usability and get user feedback to decide whether any design improvements were needed before launching it to all of their salespeople.

Approach

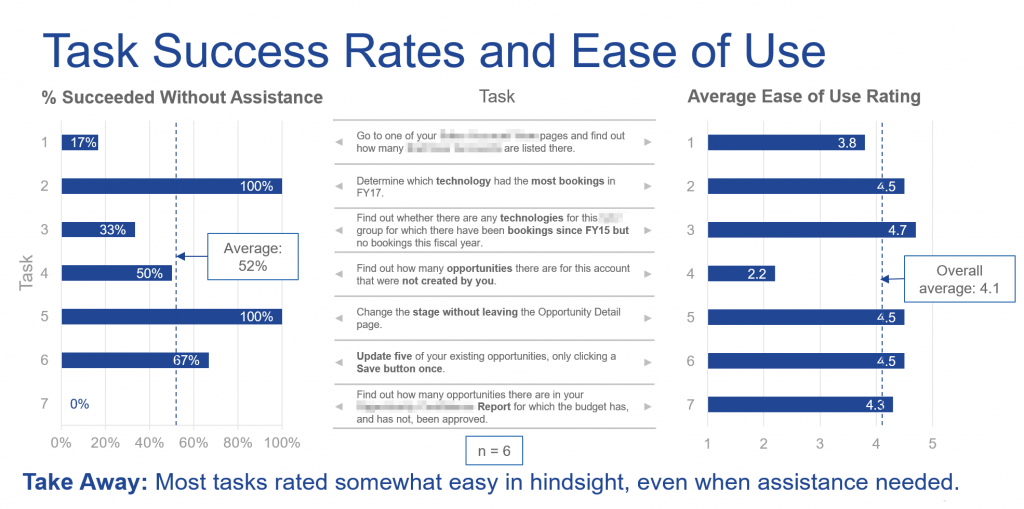

- I conducted six individual sessions via web conferencing. In each session, a salesperson from the pilot group shared their screen while attempting a realistic sequence of seven tasks. (With usability testing, a small number of sessions is usually sufficient to detect any major usability problems.)

- To obtain both objective and subjective usability data, for each task I privately noted any instances in which the user made errors or required my assistance, and asked the user to rate how easy or difficult they found it to complete the task.

- I also asked several rating questions, each with room for comments, about the simplified application overall: whether it did a better job of supporting their work than the previous version, whether users would need training to use it effectively, and whether they felt it was ready to use.
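The point about small sample sizes can be illustrated with the commonly used problem-discovery formula, 1 - (1 - p)^n, which gives the chance that a problem affecting a fraction p of users appears at least once in n independent sessions. The sketch below is only an illustration: the function name and the values of p are assumptions chosen for the example, not figures from this study. Only the session count of six comes from the project.

```python
def discovery_probability(p: float, n: int) -> float:
    """Chance that a problem affecting a fraction p of users
    is observed at least once across n independent sessions."""
    if not 0.0 <= p <= 1.0:
        raise ValueError("p must be between 0 and 1")
    if n < 0:
        raise ValueError("n must be non-negative")
    return 1.0 - (1.0 - p) ** n


SESSIONS = 6  # number of sessions in this study

# Hypothetical problem frequencies, chosen only for illustration.
for p in (0.10, 0.25, 0.50):
    chance = discovery_probability(p, SESSIONS)
    print(f"Problem affecting {p:.0%} of users: {chance:.0%} chance of appearing")
```

Under these assumptions, problems that affect many users are very likely to surface within six sessions, while rarer problems may go unseen, which is why a small test is suited to finding major issues rather than every issue.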

Results

Average ease-of-use ratings across tasks, together with the overall ratings and comments, indicated that the simplified application did a better job of supporting the salespeople's work than the previous version and was usable enough to launch to all salespeople.

However, the usability test sessions also showed that the design had room for improvement, and I identified several specific opportunities to improve usability. For example, 4 of 6 users received an error message because a newly added required field was not obvious enough.

Impact

The company was able to make a well-informed decision to launch the simplified application to all of their salespeople, while also prioritizing several specific usability improvements for the next release of the application.